Consider two webpages, both deployed on the same website (say, http://www.rishabhsingla.com/), with similar file names (index1.html and index2.html).

The content of index1.html is as follows:

The Web of today gives us, for free, much of the content that people used to pay for some time back. We have online videos to watch, regularly updated news to read, and even encyclopedic content to consume - all this for free! Even the portals providing a regularly updated view of this content are free.

The Web doesn't provide us with just free "content". It also gives us free services and tools. Users can engage in social networking, send and receive email, chat with their buddies (by text, or even by voice or video), search for images or photos, look for interesting blog posts, write blogs of their own, and even use office productivity applications - once again, all this for free!

If all these free goodies were not enough, even the applications used to access the Web are available for free. We have secure and capable Web browsers, and feature-rich toolbars which add useful functionality to these browsers.

Clearly, the Web saves people a lot of money!

The content of index2.html is as follows (not everything is the same):

The Web of today gives us, for free, much of the content that people used to pay for some time back. We have online videos to watch, regularly updated news to read, and even encyclopedic content to consume - all this for free! Even the portals providing a regularly updated view of this content are free.

The Web doesn't provide us with just free "content". It also gives us free services and tools. Users can engage in social networking, send and receive email, chat with their buddies (by text, or even by voice or video), search for images or photos, look for interesting blog posts, write blogs of their own, and even use office productivity applications - once again, all this for free!

If all these free goodies were not enough, even the applications used to access the Web are available for free. We have secure and capable Web browsers, and feature-rich toolbars which add useful functionality to these browsers.

Clearly, the Web saves people a lot of money!

You would have noticed by now that everything is the same for these two webpages, apart from the properties their links point to: index1.html links to various Google properties, while index2.html links to non-Google properties.

As far as publicly available information about Google's PageRank goes, it doesn't factor in a webpage's outbound links when calculating that page's rank. But that's only the publicly available information!

The question I ask is: will index1.html and index2.html get exactly the same rank (considering that everything about them, except their file names and outbound links, is identical)? I have my share of doubts. It's in Google's interest to promote webpages that point to Google properties, so that visitors to those webpages have a higher chance of reaching the Google properties they point to. And currently there is no way to ensure that Google is not engaging in such malpractice. The underlying reason behind my doubt is that Google is both a gateway to the Web (to both Google and non-Google properties) and a provider of some of what constitutes the Web. It helps Google if it can promote third-party pages pointing to Google properties, without letting anyone notice.
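For reference, here is a minimal sketch of PageRank in its textbook, publicly described form: power iteration with a damping factor (assumed here to be 0.85), run on a hypothetical five-page graph modelled on the experiment above. In this formulation a page's own score is built only from the rank flowing in through its inbound links; its outbound links only decide where that page passes its rank on. The page names and link structure are illustrative assumptions, and the sketch cannot tell us what Google's production ranking actually does, which is exactly the open question.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Textbook PageRank by power iteration.

    `links` maps each page to the list of pages it links to.
    """
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page receives the "random jump" share of rank.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its own rank evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page (no outbound links): spread its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical graph: the site's home page links to both test pages;
# index1.html links to a Google property, index2.html to a non-Google one.
links = {
    "home": ["index1.html", "index2.html"],
    "index1.html": ["google-property"],
    "index2.html": ["other-property"],
    "google-property": ["home"],
    "other-property": ["home"],
}

ranks = pagerank(links)
for page, score in sorted(ranks.items()):
    print(f"{page:16} {score:.4f}")
```

Because index1.html and index2.html are mirror images in this graph, the textbook formula gives them identical scores, regardless of which kind of property each one links to.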

This post echoes another concern of mine: Google promoting webpages that have AdSense deployed on them over those that either have no contextual advertising system deployed, or have one from one of Google's rivals. Read about my concern here.

P.S. I composed the short essay used as the content of index1.html and index2.html.

Friday, December 19, 2008

Thursday, December 18, 2008

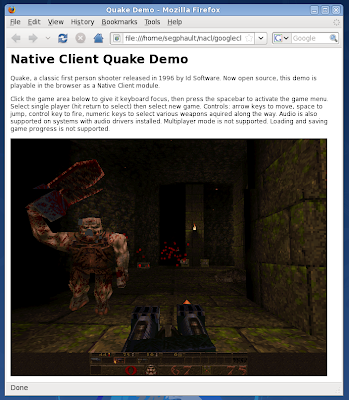

PREDICTION: Google's Native Client Technology Is A Game Changer

No matter what benefits accrue from using a Web browser to run Web applications (platform independence, sandboxed execution, etc.), the fact remains that a Web browser is yet another abstraction layer, and adds to execution inefficiency. Web code runs one layer higher, wasting precious CPU cycles and consuming more memory. The speed lag may be less pronounced on powerful modern desktop systems (in part because Web applications have so far been put to relatively "light" uses), but imagine running the full version of Gmail or Google Docs at an acceptable speed on even a relatively powerful mobile device such as the iPhone (inside the Safari browser) - it's laughable...

And so, for many months, I had been wondering: why doesn't Adobe, Google, Mozilla or a startup develop a product (either a complete platform by itself or a browser plugin) that allows "sandboxed execution of native code"?

I'm delighted to see Native Client, Google's project that does exactly that: sandboxed execution of x86 application code inside a Web browser. What's more, the characteristics we typically expect from browser-based applications (browser-neutrality, OS-independence and security) are preserved. Bravo Google! I see this project (and also JavaScript engines such as V8, which compile JS code to native code) as game changers, although I believe it will take at least a year before Native Client gets a decent amount of traction, and at least 2-3 years before it starts getting widespread adoption.
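To make "sandboxed execution of x86 code" a little more concrete: as described in Native Client's published research paper, NaCl does not trust the machine code it loads; it statically validates it first. Among its rules, code is laid out in 32-byte bundles that no instruction may straddle, and every direct jump must land on the start of a valid instruction. The toy checker below models only those two rules, over a hypothetical pre-decoded list of (size, jump target) pairs. It skips real x86 decoding, the masking NaCl requires before indirect jumps, and the segment-based memory isolation the real sandbox also relies on, so treat it as an illustration rather than a validator.

```python
BUNDLE_SIZE = 32  # bundle size used by Native Client's x86 validator


def validate(instructions):
    """Toy model of two Native Client validation rules.

    `instructions` is a hypothetical pre-decoded program: a list of
    (size_in_bytes, direct_jump_target) pairs, where the target is a byte
    offset, or None for instructions that are not direct jumps.
    Returns a list of violations (an empty list means "accepted").
    """
    errors = []
    layout = []
    offset = 0
    for size, target in instructions:
        layout.append((offset, size, target))
        offset += size
    starts = {start for start, _, _ in layout}

    for index, (start, size, target) in enumerate(layout):
        last_byte = start + size - 1
        # Rule 1: no instruction may straddle a bundle boundary.
        if start // BUNDLE_SIZE != last_byte // BUNDLE_SIZE:
            errors.append(f"instruction {index} at offset {start} straddles a bundle boundary")
        # Rule 2: direct jumps must land on the start of an instruction.
        if target is not None and target not in starts:
            errors.append(f"instruction {index} jumps to offset {target}, not an instruction start")
    return errors


print(validate([(5, None), (2, 0), (25, None)]))  # fills exactly one bundle
print(validate([(30, None), (5, None)]))          # second instruction crosses offset 32
print(validate([(5, None), (2, 3)]))              # jumps into the middle of an instruction
```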

My personal feeling is that this is a nail in the coffin of desktop applications as we've traditionally known them. It makes it possible to securely run the full Photoshop inside a browser. And this is the technology (or a derivative thereof) that will eventually subvert or supersede the myriad of technologies fighting for dominance as the platform of choice for applications served from the Web (Web browsers, Adobe Flash, Microsoft Silverlight, Adobe Integrated Runtime, Sun Microsystems JRE/JavaFX, Mozilla Prism, etc.). To be fair, it's not the first time native code is being run in a browser - that credit deservedly goes to Microsoft, whose ActiveX has long had this ability, albeit, infamously, insecurely (there is a reasonable probability that ActiveX could get a second life, if Microsoft evolves the technology to make it more secure). By creating a viable application platform layer at the browser level, Google further undermines the role of the operating system as a software platform, loosening the long-held grip of Microsoft and Apple on their respective software ecosystems. Over the long term, NaCl poses a serious threat to these now-popular operating systems.

Of course, Native Client is still at an embryonic stage. It will progress in both evolutionary and revolutionary ways, and my belief that it will be a game changer factors in this expected evolution. Native Client has the essential quality any would-be contender for the dominant software platform must possess: cross-platform and cross-browser support (support on mobile platforms such as Android should follow in some time). Looking at it from an inverted perspective, I see no reason why Native Client shouldn't succeed. Finally, I see Native Client getting bundled with Chrome down the road (the way Gears has been).

Chrome (secure architecture + inbuilt search) + Gears (offline) + Native Client (heavy duty functionality + speed) = A fulfilling user experience for various types of applications and content.

I wonder why this project didn't get as much press coverage as I believe it deserves... Does my post on journalism provide some indirect (*cough*) explanation (read: ranting...)?

Sunday, December 14, 2008

Human brain's information-retrieval system is imperfect (apparently)

This post is a continuation of my previous post (Human brain could be storing & retrieving information as 'related blocks').

About a year back, my sister and I were at home watching a program on TV. A character appeared whom I felt I had seen before. I started trying to recall his name, but couldn't. My sister knew the name, and after watching me try to recollect it for about 5 minutes, she finally said it aloud, and I exclaimed "Yes! This is his name!".

I immediately realized something. To be able to confidently say "Yes, this is the name", I must have compared the name my sister spoke to a name already residing in my memory. After all, I couldn't claim that the name my sister spoke was his name unless I already had a full copy of that name in my brain to compare it with.

This leads me to two things:-

- Although my brain's information storage system had successfully stored the name, the information retrieval system was unable to read it

- Our brains have much more information stored inside them than we know. The inability of the information retrieval system to retrieve all that information doesn't mean that the information isn't there

Possible reasons for this retrieval failure:-

- Wear and tear over time, leading to partial damage to the information retrieval system

- Inherent shortcomings in the system's design

- My focus was on some other task, so the retrieval system wasn't focused on the right block (to better understand this point, read this - Human brain could be storing & retrieving information as 'related blocks')

- An excessive amount of information had been stored in the brain, and the retrieval system either found it difficult to retrieve information (pointing to a design flaw in the retrieval system, or its inability to scale), or the retrieval process required more time (there's no flaw in the design of the retrieval system, but the time needed to retrieve information is proportional to the amount of information stored)

Possible ways to overcome these limitations:-

- Improving the hardware and algorithms of the brain using genetic engineering

- Connecting the brain to external equipment to copy information stored in it onto a computer, and retrieving it from there
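The tip-of-the-tongue reasoning above (the name was recognized, so it must have been stored, even though it couldn't be recalled) can be captured in a deliberately simplistic toy model in which storage and the cue-based retrieval path are separate structures. Losing the retrieval path then leaves the stored item intact and still available for recognition by comparison. The class and its method names are hypothetical; this illustrates the argument, not how the brain actually works.

```python
class ToyMemory:
    """Hypothetical model: storage and the cue-based retrieval path are kept separately."""

    def __init__(self):
        self.store = set()  # everything that has been stored
        self.index = {}     # retrieval path: cue -> stored item

    def learn(self, cue, item):
        self.store.add(item)
        self.index[cue] = item

    def lose_retrieval_path(self, cue):
        # Simulates a retrieval failure: the path from cue to item is gone,
        # but the item itself is still in storage.
        self.index.pop(cue, None)

    def recall(self, cue):
        """Free recall: needs a working path from the cue to the item."""
        return self.index.get(cue)

    def recognize(self, candidate):
        """Recognition: only needs a comparison against what is stored."""
        return candidate in self.store


memory = ToyMemory()
memory.learn("face of the TV character", "the character's name")
memory.lose_retrieval_path("face of the TV character")

print(memory.recall("face of the TV character"))  # recall fails
print(memory.recognize("the character's name"))   # recognition still succeeds
print(memory.recognize("some other name"))
```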

Sunday, November 16, 2008

Inconsistency Between Results Of Google Search And Suggestions Of Google Suggest

When I type apple developer connection into the Google search box, it returns http://developer.apple.com/ as the top result (and the I'm Feeling Lucky button takes one to this URL). However, when I type the same query into the address bar of Google Chrome, the built-in Google Suggest feature shows me http://developer.apple.com/iphone/ as the suggested URL.

The joy of using Google and Chrome is marred by this inconsistency. I've been using Google for years now, and I'm used to typing certain queries and expecting certain results at fixed positions on the SERP that Google returns. I'm also quite used to the Browse By Name feature in Firefox, and expect that if a particular query typed into the location bar of Firefox takes me to a specific URL (because of the Browse By Name feature), then the same query typed into the location bar of Chrome should return the same URL as a suggestion.

This, after all, makes perfect sense!
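The expectation described above amounts to a simple consistency check: for every query, the URL that I'm Feeling Lucky (or Firefox's Browse By Name) lands on should equal the URL that Chrome's Google Suggest offers. A minimal sketch, assuming the two sets of results have already been recorded by hand as query-to-URL mappings; the function name is hypothetical, and the sample data is the one query reported in this post.

```python
def inconsistencies(lucky_results, suggestions):
    """Return {query: (lucky_url, suggested_url)} for queries where the two disagree.

    Both arguments map a query string to the URL each feature produced.
    Only queries present in both mappings are compared.
    """
    return {
        query: (lucky_results[query], suggestions[query])
        for query in lucky_results.keys() & suggestions.keys()
        if lucky_results[query] != suggestions[query]
    }


# The one observation reported in this post.
lucky = {"apple developer connection": "http://developer.apple.com/"}
chrome_suggest = {"apple developer connection": "http://developer.apple.com/iphone/"}

print(inconsistencies(lucky, chrome_suggest))
```

URLs are compared as plain strings, so a mere trailing-slash difference would also be reported; normalizing URLs first would be a reasonable refinement.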

The screenshots below show the inconsistency:-

I just noticed that all the visible bookmarks in the Bookmarks Toolbar of my install of Chrome point to Google properties! A sign of things to come?

Saturday, October 11, 2008

The Blog Invasion - A Drop In Journalism Quality At CNET News

It's a little silly that I'm going to point this out in a blog post, but I'm increasingly sick of the unusually large number of blog posts coming up on some of my (till recently) favorite and most-read news websites, such as CNET News.

Update (18-12-08): A recent story in The Wall Street Journal claims that Google's recent actions indicate a reversal of its previous stance on Net Neutrality. Once again, a confused, ignorant and misinformed journalist, drawing premature conclusions and judgments, is to blame. With apparently no fundamental knowledge or understanding of computer science, computer networks, caching, content delivery networks and edge computing, the WSJ journalist is making hyperbolic claims that reveal his confusion, ignorance and misinformation. I completely agree with Google's visibly enraged response blasting this story in The Journal.

There was a time some years back when I would go to CNET News daily (it was located at http://news.com.com/ back then) and find a dozen or more fresh, well-written news stories, free from immature and misinformed personal opinions, and both enjoyable and insightful. The advent of blogs on the Web, initially by independent individuals on third-party services such as Blogger and later on news websites, started the trend of what CNET now calls a News Blog. Initially, these News Blogs took up only a small proportion of the total number of stories published by CNET News. Slowly but steadily, however, their proportion grew, until the day came when News Blogs finally outnumbered News Stories on the homepage of CNET News. And this, in my opinion, was an unfortunate event, not just for CNET News (and its discerning readers), but for Web-based journalism as a whole (I see a similar trend on some other websites, such as Wired).

The final blow came when CNET stopped marking these News Blogs with a large, clearly visible News.Blog banner, and gave all types of stories a unified http://news.cnet.com/ domain (previously all these News.Blog posts had a separate Internet sub-domain). Together, these 2 changes ensure that not only does one not know, before clicking on a link pointing to CNET News (say, from Google News), that it points to a News Blog and not a news story, but worse, one can't always be sure that one is reading a blog post even after having landed on the page. Additionally, news aggregators such as Google News are mistakenly including these News Blogs among the news stories they list, when the correct place for such posts is the newly revamped Google Blog Search (now in Google News format). One of Google's goals is to organize the world's information and make it universally accessible and useful, and an important step in this direction will be to separate indisputable and reliable facts from disputable and unreliable personal opinions. My reasoning is that there is a clear line of distinction between News Stories and Blog Posts, as outlined below:-

- A News Story: Should present pure and unbiased facts (as they happened), and only pure and unbiased facts

- A Blog Post: Should present pure and unbiased facts (if it presents them at all, which is not required of a blog post), but can additionally add personal opinions (which can certainly be biased, provided a reasonable and sufficient attempt is made to make the reader aware that he is reading a blog post and not a news story, and aware of the implications of the same)

The issue I have with CNET News is that it labels and markets itself as a News Website, whereas with News Blogs generally outnumbering News Stories on its homepage, it should ideally be branded as CNET News Blogs, thus reflecting the disproportionate share of blog posts. Readers at large should not be tricked into believing that they're visiting a News Website when in reality they are being given a heavy dose of personal opinions, instead of facts and logical analysis.

Which brings me to the pathetic, and often hilarious News Blogs that many (most?) journalists write. With apparently no real understanding of the underlying business models or technologies, many journalists are dishing out "analysis", "opinions" and hilariously, even "forecasts" and "predictions" about brands, products and segments in the technology sector. Just look at this story and I bet you'll either laugh holding your tummy or get to the verge of crying. The hopelessly pathetic nature of this unusually immature post can be effortlessly judged from the expectedly large number of reader comments it has accrued (which, by the way, are way more correct and enjoyable to read than the story itself - maybe it's Computerworld's secret futuristic 2025 AD strategy of making readers themselves create great content for Computerworld for free, by Computerworld putting up a post full of the material ejected from south end of a cow, thus triggering a surge of corrections and fresh inputs from infuriated readers).

Not only is the correctness of journalism questionable at these so-called News Blogs (I can't digest this term: how can something be both News and Blog?), the professionalism of the language used is questionable as well. Look at this story on CNET News. Comparing it to the flavor I get on The New York Times and The Wall Street Journal (notice that WSJ has a separate sub-domain for blogs at http://blogs.wsj.com/ to clearly separate authentic news stories from blog posts), I realize why NYT and WSJ are NYT and WSJ, and why CNET is CNET and perhaps will remain CNET.

It infuriates me how right now CNET News homepage is highlighting 15 stories in large font size, and out of those at least 8 are blog posts (most blog posts on CNET News look so identical to news stories that it's hard to decide what is what). Unless a clearly-visible banner is added to each blog post which indicates that this is a blog post and not a news story (along with cautionary implications of the same), such masquerading of blogs as news stories by CNET is tantamount to misinformation.

My 2 cents on the degrading quality of journalism in the age of the World Wide Web.

Sunday, October 05, 2008

Credibility Of OpenOffice.org 3.0 As An Alternative To Microsoft Office 2003 - My Transition Experiences (And More)

For a month or so, I've been trying out the new OpenOffice.org 3.0 Release Candidate 1 (I had previously been using Microsoft Office XP and 2003 for well over 6 years).

Why am I trying out OpenOffice.org?

- To build an understanding of its capabilities, ease-of-use, quality & performance

- To find out the issues which are inhibiting its mainstream adoption

- To compare it to Microsoft Office & list out major positive & negative differences

- To find out if it can really be an alternative to Microsoft Office (This feasibility study is for both my personal use and for deciding whether OpenOffice.org is ready for adoption in SMBs/Enterprises)

Results of my month-long tryout:-

- OpenOffice.org is very suitable for my personal needs. It can fulfill all my 'creative' office-suite needs. By 'creative' I mean that OpenOffice.org is suitable for creating documents. It isn't the perfect solution for importing/opening documents in the Microsoft Office binary formats. The importing is buggy and plain unsatisfactory, and leads to significant productivity loss in the form of the manual cleanup/editing required to restore the document's original form. However, broader adoption of the Office Open XML and OpenDocument formats, and improvements to OpenOffice.org's import filters for Office Open XML, should considerably alleviate this issue.

- OpenOffice.org applications start up considerably slower than Microsoft Office applications, and this is a significant issue. The effect of this performance lag can be imagined from the woes Web-browser users faced a few years back, when Netscape and Mozilla browsers would start up painfully slowly. Quick application launch is a mandatory requirement for a good user experience, and OpenOffice.org needs to bridge this performance gap sooner rather than later.

- OpenOffice.org applications require considerably more system memory than Microsoft Office applications. Also, the user interface of OpenOffice.org applications is considerably less responsive than that of Microsoft Office applications (although in absolute terms it is more than satisfactory). In summary, OpenOffice.org has performance issues that need to be addressed immediately. OpenOffice.org would benefit immensely from stringently following the Google User Experience Design principles.

- OpenOffice.org is a feature-rich, high-quality and easy-to-use suite of applications. It is close-enough to the ease-of-use of Microsoft Office 2003 applications for it to be declared fit for consumption by general public.

- Apart from the performance and file-format-compatibility issues, another significant issue inhibiting mainstream adoption of OpenOffice.org is the lack of awareness (among masses) about its existence, its quality and its suitability as an alternative to Microsoft Office. People just don't know that there exists an office-suite out there which is a credible alternative to the expensive Microsoft Office. How many of us, who are aware of OpenOffice.org, know that there exists an extension system for OpenOffice.org which is akin to Mozilla's Add-ons system? OpenOffice.org needs to learn from Mozilla Foundation and Mozilla Corporation to solve this issue.

- Finally, masses (and in this case SMBs and Enterprises as well) are unaware that using OpenOffice.org in conjunction with the free (and official) Microsoft Office Viewers can be a largely complete and compromise-free combination for users whose needs revolve primarily around opening/viewing Microsoft Office documents obtained from third parties and creating their own documents first-hand.
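The first bullet above distinguishes three families of file formats: the legacy Microsoft Office binary formats, Office Open XML and OpenDocument. They are easy to tell apart at the container level. The binary formats are OLE2 compound files with a fixed 8-byte signature, while both XML-based formats are ZIP packages, with OpenDocument carrying a `mimetype` entry and Office Open XML a `[Content_Types].xml` entry. A minimal sketch using only Python's standard library; `sniff_office_format` is a hypothetical helper, and it inspects containers only, saying nothing about how well any application imports the contents.

```python
import io
import zipfile

# Signature of the OLE2 compound file used by legacy .doc/.xls/.ppt files.
OLE2_MAGIC = bytes.fromhex("D0CF11E0A1B11AE1")


def sniff_office_format(data: bytes) -> str:
    """Roughly classify an office document by its container format."""
    if data.startswith(OLE2_MAGIC):
        return "Microsoft Office binary (OLE2)"
    if data.startswith(b"PK"):  # both ODF and Office Open XML are ZIP packages
        try:
            with zipfile.ZipFile(io.BytesIO(data)) as package:
                names = package.namelist()
                if "mimetype" in names:
                    mime = package.read("mimetype").decode("ascii", "replace").strip()
                    if mime.startswith("application/vnd.oasis.opendocument"):
                        return f"OpenDocument ({mime})"
                if "[Content_Types].xml" in names:
                    return "Office Open XML"
        except zipfile.BadZipFile:
            return "unknown"
        return "ZIP package (not a recognized office format)"
    return "unknown"
```

Usage would look like `with open("report.doc", "rb") as f: print(sniff_office_format(f.read()))`, where the file name is just a placeholder.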

In summary, OpenOffice.org 3.0 is a serious and credible challenger to Microsoft Office 2003. Version 3.0 is well ahead of its relatively unbaked predecessors, and, minor annoyances apart, OpenOffice.org 3.0 promises to be the first credible challenger to Microsoft Office. Customers looking to save hundreds or thousands of dollars should adopt OpenOffice.org with open arms, if their specific needs are in line with those outlined in this post. I recommend it for adoption by individuals, SOHOs and SMBs, since cost of acquisition is a more pressing factor for them than for cash-rich large enterprises, which are not willing to make any compromises, whatever the monetary cost.

Saturday, October 04, 2008

Google Is (Probably) Tampering With Its Search Results (Perhaps To Hurt Microsoft) - Intriguing Evidence

When I read about Microsoft's launch of the SearchPerks! program, I felt like reading about it on Wikipedia (I generally visit Wikipedia to read up on things). I typed microsoft searchperks wikipedia into Google, and the top result pointed to the SearchPerks! article on Wikipedia.

Specifically, I reached this version of the article. Since at that time this was the most recent version of the article, it was the one being shown as the main article. Once again feeling like puking about Microsoft's desperate actions, I decided to edit this article to give readers a real perspective on this program.

So in the subsequent hours, I made multiple edits to the article, which can be seen here, here, here, here, and here (in order from oldest to newest). Note that I still believe my perspective on this program (the way I presented it in Wikipedia) should be included in the Wikipedia article: it deserves to be told to readers, since it is true.

From time to time I would visit this webpage (always using Google to get me to the Wikipedia page) to check if someone had added to, edited or removed what I had added to the article. And every time, Google would show the Wikipedia article as the top search result, whether the query was searchperks wikipedia, live searchperks wikipedia, windows live searchperk wikipedia or microsoft live searchperks wikipedia.

However, today when I tried to reach this page via Google, Google no longer showed a link to the Wikipedia article.

The following screenshots prove this:-

Also, a search restricted to the English Wikipedia domain (en.wikipedia.org) returns zero results for the term searchperks:-

Finally, searching for the URL of the SearchPerks! program returns zero results:-

It seems that Google is manually tampering with its search results.

There is another interesting thing to observe. Look at this screenshot:-

It shows the search results page on Google for the query windows live searchperks wikipedia. Note that there are results from Wikipedia in the list of results returned by Google.

However, when I click on the More results from en.wikipedia.org link on the webpage, there are zero results, as visible in the screenshot below:-

This is awkward (and illogical) because if Google's algorithms return results from en.wikipedia.org on the main search results page for the query windows live searchperks wikipedia, then why aren't at least those same results returned when the same query is run for the domain en.wikipedia.org?
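The argument rests on an invariant: assuming, as this post does, that restricting a query to one domain should never drop results that the unrestricted query already returns from that domain, any on-domain URL missing from the restricted results is a red flag. A minimal sketch of that check; the function name and the URLs are hypothetical stand-ins for what the screenshots showed.

```python
from urllib.parse import urlparse


def site_restriction_gaps(main_results, site_results, domain):
    """URLs from `domain` on the unrestricted results page that the
    domain-restricted search for the same query fails to return."""
    site_set = set(site_results)
    return [
        url
        for url in main_results
        if urlparse(url).hostname == domain and url not in site_set
    ]


# Hypothetical URLs standing in for the results described above.
main_page = [
    "http://en.wikipedia.org/wiki/Hypothetical_Article",
    "http://www.example.com/some-other-result",
]
restricted = []  # the domain-restricted search returned zero results

print(site_restriction_gaps(main_page, restricted, "en.wikipedia.org"))
```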

Thursday, October 02, 2008

My Concerns About The Proposed Google-Yahoo Search Advertising Deal (In Context Of AOL Search & Ask.com)

The current state of Web search engines is as follows:-

- Google: Search results = Google | Ads = Google

- Yahoo: Search results = Yahoo | Ads = Yahoo

- Live/MSN Search: Search results = Microsoft | Ads = Microsoft

- AOL: Search results = Google | Ads = Google

- Ask: Search results = Ask.com | Ads = Google / LookSmart

My present concerns are as follows:-

- Google already powers search results on 2 of the top 5 search engines, and ads on 3 of the top 5 search engines. In effect, although we have an impression that there are 'Five' distinct search engines, in reality we have only 4 search engines and only 3 mainstream search-ad engines. AOL and Ask.com nicely create an impression of prevailing competition in the search engine business, while hiding the fact that Google powers them in one way or the other.

- Far more important than the number of top search engines powered by Google's search results and Google's ads is Google's 'share' of search results and search ads. If both direct and indirect counts are made, Google is an unquestionable monopoly when it comes to search results and search ads.

- Google's share in the search engine market is growing relentlessly month-by-month, further choking the air supply of the few credible alternatives left and sending them into a downward spiral.

- Since the search engine business is very capital intensive, it's almost impossible for any startup to compete with Google (and other top search engines). Look at Cuil and Wikia Search: both started off with lots of buzz and media coverage, and have now been relegated to the 'virtually non-existent' and 'insignificant' category. Their presence or absence doesn't matter.

- It is possible (and likely) that in the next 2 years, Google will have over 90% of search engine market share, a dangerous situation for the Web and for the search business.

- Ask.com is the leading underdog out of the top 5 search engines. Its search results page already seems to rely more heavily on Google-powered ads than on its organic search results, and, embarrassingly, the ads are often more relevant than the search results themselves. I have observed that Ask.com not infrequently gives irrelevant results, and if it wishes to maintain or grow its market share, it must switch to Yahoo's search results. I believe that Yahoo-powered search results and Google/Yahoo-powered ads are a life-saving combination for Ask. Also, Ask should sell its search engine intellectual property (algorithms, engineers, patents, etc.) to Microsoft, as it's unlikely that Ask will be able to compete with the other search engines using its own search results. Finally, the user interface of Ask.com is cluttered, complex and slow, and Ask must revamp its user interface (especially the search results page) if it wishes to stop its audience from defecting to rivals.

The above list will look like the following if the Google-Yahoo deal does take place:-

- Google: Search results = Google | Ads = Google

- Yahoo: Search results = Yahoo | Ads = Yahoo & Google

- Live/MSN Search: Search results = Microsoft | Ads = Microsoft

- AOL: Search results = Google | Ads = Google

- Ask: Search results = Ask.com | Ads = Google / LookSmart
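The counts behind these concerns can be tallied directly from the two lists above. A small sketch that encodes those lists as data (the helper names are hypothetical): before the deal, Google supplies results for 2 of the 5 engines and ads for 3, there are 4 distinct results providers, and after the deal Google ads would appear on 4 of the 5. The ad providers come to 4 if LookSmart is counted; the "only 3 mainstream search-ad engines" figure earlier in this post leaves it out.

```python
# Each engine: (search results provider, set of ad providers), as listed in this post.
BEFORE_DEAL = {
    "Google": ("Google", {"Google"}),
    "Yahoo": ("Yahoo", {"Yahoo"}),
    "Live/MSN Search": ("Microsoft", {"Microsoft"}),
    "AOL": ("Google", {"Google"}),
    "Ask": ("Ask.com", {"Google", "LookSmart"}),
}
# The proposed deal changes only Yahoo's ads.
AFTER_DEAL = dict(BEFORE_DEAL, Yahoo=("Yahoo", {"Yahoo", "Google"}))


def google_footprint(engines):
    """(engines whose results come from Google, engines showing Google ads)."""
    results = sum(1 for provider, _ in engines.values() if provider == "Google")
    ads = sum(1 for _, ad_providers in engines.values() if "Google" in ad_providers)
    return results, ads


def distinct_providers(engines):
    """Distinct results providers and distinct ad providers behind the engines."""
    results = {provider for provider, _ in engines.values()}
    ads = set().union(*(ad_providers for _, ad_providers in engines.values()))
    return results, ads


print(google_footprint(BEFORE_DEAL))  # Google results on 2 engines, Google ads on 3
print(google_footprint(AFTER_DEAL))   # Google ads on 4 of the 5
print(distinct_providers(BEFORE_DEAL))
```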

My additional concerns, if the deal does take place, are as follows:-

- One of the only 2 credible Google alternatives (Yahoo and Microsoft) will start to become dependent on Google. Increased cash flows from Google ads will leave Yahoo little incentive to innovate and improve its advertising technology. The deal is a poison pill for Yahoo. Although Yahoo contends that the increased cash flows from the deal will allow it to invest in improving its advertising technology, the deal will make Yahoo's advertising system less attractive to advertisers and Google's platform even more attractive, further decreasing the profitability of Yahoo's own ads and making Yahoo more dependent on Google ads for revenue. This downward spiral may ultimately lead to the collapse of Yahoo's ad system.

In summary, I believe that this proposed deal should be blocked, so that Yahoo is forced to innovate and improve its own search engine and advertising network. This will be good for both Yahoo and the Web in the long term.


Wednesday, September 24, 2008

Missing Simplicity - 3 GiB Install of Windows XP

I was surprised, saddened and a little worried when I saw the properties of the Windows folder on my computer. Over 3 GiB just to install a non-stellar operating system? Where are we heading? Perhaps 20 GiB and 40,000 files just for the install of Windows 7?

I wish to point out that a clean install of Windows XP takes up much less space than this and has far fewer files as well. It's only after one installs all the updates, patches, etc. that the Windows directory becomes excessively bulky and cluttered (in fact, the System32 folder on my computer has so many files that even scrolling through it slows down tremendously as Windows Explorer relentlessly tries to render all the file icons).

Windows keeps saving backups of all the old files whenever I update a component, something I just don't want, but something Windows doesn't let me opt out of. Please, Microsoft, I just don't want to go back to IE 6 when I install IE 7, IE 7 when I install IE 8 Beta 1 and IE 8 Beta 1 when I install IE 8 Beta 2. Please don't save IE 6 and IE 7 when I install IE 8. I just don't want them back. I want a clean, light and fast system. So please, at least give me an option to not save anything that I don't want saved.

Missing those days when I used to play the 75 KiB Dave game on a 1 MiB MS-DOS install.

Seventy. Five. Kibibytes. Phew!

Friday, September 12, 2008

Lehman Brothers Holdings Inc. - Collapse of an Icon

It's a slightly emotional moment for me, as I keep track of the series of unfortunate developments taking place one after the other at one of the world's oldest, largest, most well known, most respected and most successful financial titans.

Lehman Brothers Holdings Inc., undoubtedly an icon, is on its deathbed. I feel emotional about this because in a way, I associate myself with Lehman Brothers. In my final year at college, Lehman came to my college for campus recruitment. And I remember that, shamefully, I just missed it. Yes, I missed it for 2 reasons:

- I was at home when Lehman came, recruited people from my college and went back.

- My resume wasn't sent to Lehman by my college's Placement Cell (or so I've heard).

I remember feeling quite sad for many days when I came to know that Lehman had come and gone all of a sudden, unexpectedly, without my even knowing it. And even to this day I never fail to narrate the story of Lehman to my friends.

So today, when I read about the impending demise of Lehman Brothers, I feel sad, the way Lehman employees are feeling sad.

Staff gathered in a meeting room at the Lehman Brothers office in Canary Wharf in London on Thursday.
